On Thursday, August 28, we discussed how much of the content on the Internet is AI-generated, fake or otherwise produced automatically, and whether this matters in our daily lives. We started by watching this YouTube video presented by software engineer Vanessa Wingårdh.

Here’s a list of the chapters covered in this video:

00:00 Intro

01:00 Dead Internet Theory

02:01 More Bots on the Internet than Humans

02:43 YouTube Bots

03:04 Bot Networks

03:40 TikTok Bots

04:07 Reddit Bots

05:21 X Bots

05:56 Meta AI Companion Bots

07:25 Facebook’s Farmville and Algorithms

08:46 Platforms as Walled Gardens

09:58 AI Slop is Taking Over

10:40 YouTube AI Generated Content

11:09 TikTok AI Generated Content

11:30 LinkedIn AI Generated Content

11:41 AI Generated Vogue Models

11:52 Dating Apps Using AI

12:45 Academia and AI

12:56 TikTok Bots Stealing YouTube Content

13:15 The Decline of Google Search

13:35 Google’s AI Overview

15:24 People Using ChatGPT As A Search Engine

15:58 Why Corporations Want A Dead Internet

17:54 What Happens When the Internet Dies?

This video highlights how automated accounts (bots) and fake profiles are flooding social media with garbage and scams. This feeds into the “Dead Internet Theory” (https://en.wikipedia.org/wiki/Dead_Internet_theory), which suggests that this is part of a conspiracy run by “state actors” to control the behaviour of consumers. You could, however, look at it from another point of view: the bots merely encourage users to click on and “like” content in order to generate advertising revenue or, in the case of scams, help criminals extract money from victims.

We looked at Facebook and how it could be infested with bots. Chris suggested that the Javea Computer Club page (which is public) might have attracted a “bot attack” when we posted the headline of a JCC presentation entitled “Help – my Facebook account has been hacked!”. The post received comments that were obviously from fake accounts and which, on reflection, were more likely written by bots than by humans. Javea Connect, by contrast, is a closed Facebook group with membership approval, so it should be relatively safe from fake accounts. However, it has over 31,000 members, which seems a lot to manage and approve!

Regarding Google’s AI Overview, powered by Google’s Gemini AI (discussed in the video from 13 minutes 35 seconds), Chris noted that she uses this tool to provide frameworks for Club discussions.

For this discussion, she asked Google: “How much of the information on the internet is ai generated?” This was the answer:

There’s no consensus on the exact percentage, but recent studies indicate a significant and rapidly increasing amount of AI-generated content on the internet, with some suggesting around 57% of web content is AI-related, primarily as low-quality translations, and others noting that 74% of new web pages include AI content. This trend is leading to a negative feedback loop where AI models train on their own output, potentially degrading the quality and trustworthiness of the internet.

Evidence and Figures

Around 57% of web content:

Some studies, including one from Amazon Web Services (AWS) researchers, found that roughly 57% of all web-based text consists of AI-generated or translated content, often of poor quality.

High percentage of new pages:

A more recent study from Ahrefs (May 2025) found that 74% of new web pages contain AI-generated content.

Explosive growth:

Since the arrival of tools like ChatGPT, AI-generated content has surged, with one report noting an 8,000% increase.

Europol prediction:

A report from the European Union Agency for Law Enforcement Cooperation (Europol) warned that up to 90% of online content could be synthetically generated by 2026, though this is an estimate from an earlier period.

The Negative Feedback Loop

AI “eating” its own training data:

AI models are trained on vast amounts of internet data. As more AI-generated content floods the internet, these models begin to train on their own output, leading to a cycle where the quality of information can degrade over time.

Dominance of poor translations:

Much of the AI-generated content consists of poor-quality, multi-way parallel translations intended to capture ad revenue, particularly in less common languages.

Concerns

Decreased trustworthiness:

The prevalence of AI-generated content raises concerns about the accuracy and reliability of information online.

Impact on search engines and users:

A significant amount of this content is seen as noise, aimed at generating ad revenue rather than providing valuable information. This can make it harder for users to find trustworthy information and could potentially reduce traffic to human-created sites.

According to this AI summary, although the exact proportion is unknown, it appears that a significant portion of the information on the Internet is AI-generated or contains AI content, and this trend seems to be increasing. Much of this appears to be linked to low-quality translations.

(Note: re-running the same question a day later gave a different answer!)

Peter related how he was trying to do some astronomy research. While searching the Internet, he kept coming across articles giving watered-down explanations of the phenomenon he was studying. These were uncanny in their similarity and had the hallmarks of being AI-generated.

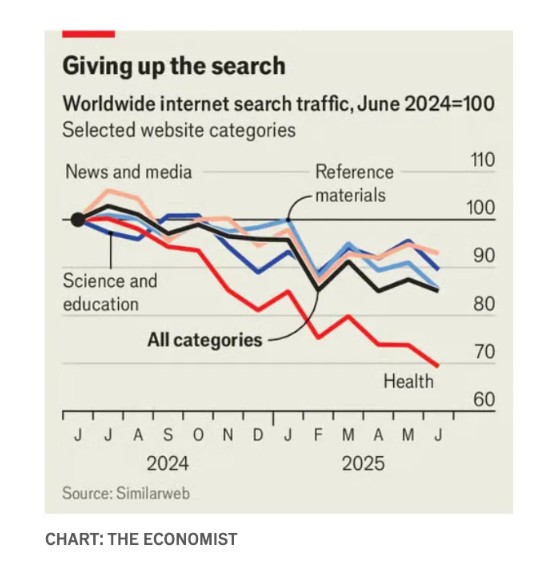

The widespread use of AI-generated summaries has cut the number of Internet searches. Few people bother to click on the small number of links given by the summaries and read the source articles, let alone take the time to “Google” the information they want.

This has profound knock-on effects, as described in an article in The Economist, “AI is killing the web. Can anything save it?” (https://www.economist.com/business/2025/07/14/ai-is-killing-the-web-can-anything-save-it). Many websites make money through advertisements or subscriptions, so they lose money if people don’t visit them. Wikipedia has suffered because its pages are summarised by AI, so fewer people visit the site. This, in turn, has led to a fall in the number of contributors: why bother writing a Wikipedia page if no one is going to read it? People are trying to find ways to get the AI companies to pay for the information they “scrape” from the Internet, since content creators no longer receive revenue via advertising.

We discussed the accuracy of AI summaries. AI has a history of “hallucinating”, i.e. making things up. Such hallucinations can eventually be treated as fact and become the basis for further AI summaries. To know the truth, we should be sceptical and actively seek out accurate information. The average person, however, is not going to bother with that and will be content with what AI tells them. In addition, many will have had their ideas moulded and reinforced by bots and fake videos on social media.

Where should we seek the truth? Few people believe what governments, scientists and mainstream media say these days, and AI isn’t helping!

Perhaps community-driven projects will be able to use the infrastructure of the Internet to create alternatives to the World Wide Web, which has become dominated by a few powerful companies, AI and bots. Time to bring back the humans!

Chris Betterton-Jones – Knowledge Junkie